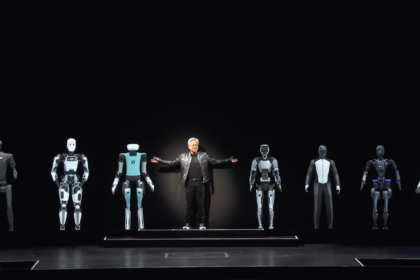

SK hynix has announced the start of mass production of its new SOCAMM2 memory modules, which offer capacities of up to 192GB. The modules are designed for next-generation data centers built around upcoming NVIDIA processors and accelerators, including its Vera CPUs and Rubin GPUs.

The launch is aimed at the growing memory demands of artificial intelligence workloads.

SOCAMM2 is the second generation of SOCAMM (Small Outline Compression Attached Memory Module), a server-oriented adaptation of the CAMM2 format previously introduced in high-end laptops. Unlike traditional DIMMs, which stand upright in motherboard slots, a SOCAMM2 module lies flat and attaches directly to the PCB.

This design shortens the signal path to the processor, which helps reduce latency, and allows greater memory density within limited physical space, a key requirement in modern data centers. The flat layout also improves airflow, helping to address the thermal challenges of high-performance computing environments.

Higher Density to Meet AI Demands

With 192GB per module, SK hynix is pushing memory capacity to levels rarely seen outside of specialised solutions such as high bandwidth memory. The increased density is intended to support large-scale AI models, including large language models, which need vast amounts of memory to run efficiently.

The rollout is closely aligned with NVIDIA’s upcoming hardware platforms, as its Vera and Rubin architectures are expected to rely heavily on high-capacity memory to meet growing demand from enterprise and cloud customers.

SK hynix is not alone in this segment. Samsung Electronics and Micron Technology are also developing SOCAMM2 solutions tailored for AI servers.

While SK hynix and Samsung are offering modules with capacities up to 192GB, Micron is reportedly advancing even further, with modules reaching 256GB. The competition reflects the rapid pace of innovation driven by demand from major technology companies.